“Deep” machine learning can leverage labeled datasets, also known as supervised learning, to inform its algorithm, but it doesn’t necessarily require a labeled dataset. It can ingest unstructured data in its raw form (e.g., text, images), and it can automatically determine the set of features that distinguish one item from another. By observing patterns in the data, a deep learning model can cluster inputs appropriately. For example, we could group pictures of different items into their respective categories based on the similarities or differences identified in the images.

With that said, a deep learning model requires more data points to improve its accuracy, whereas a classical machine learning model can learn from less data because it relies on the structure that humans have already imposed on that data.

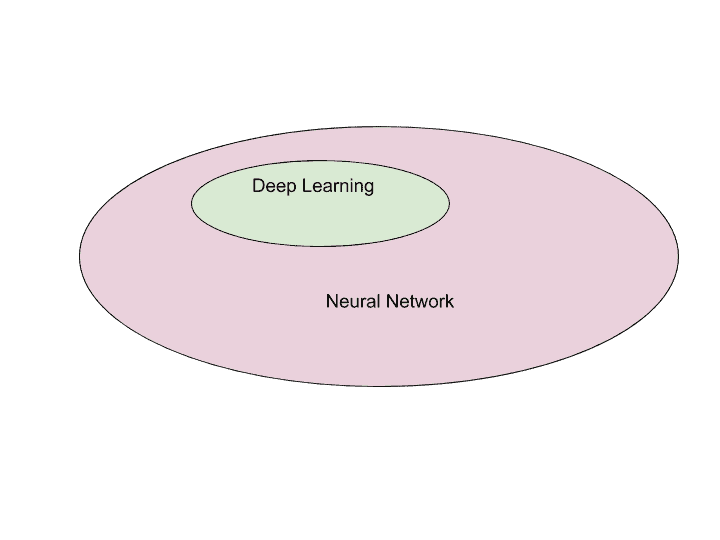

Deep learning can be understood as a subset of machine learning. The primary difference between the two is how each type of algorithm learns and how much data it uses. Deep learning automates much of the feature extraction part of the process, eliminating some of the manual human intervention required, and it also enables the use of large data sets. This capability will be particularly interesting as we begin to explore the use of unstructured data more, particularly since an estimated 80-90% of an organization’s data is unstructured.

Classical, or “non-deep”, machine learning is more dependent on human intervention to learn. Human experts determine the hierarchy of features needed to understand the differences between data inputs, which usually requires more structured data to learn from.

Inside a neural network, each node compares its output against a threshold. If the output of an individual node is above the specified threshold value, that node is activated and sends data to the next layer of the network; otherwise, no data is passed along to the next layer. In deep learning this process is repeated many times for a single decision, because neural networks tend to have multiple “hidden” layers. Each hidden layer has its own activation function, potentially passing information from the previous layer into the next one. Once all the outputs from the hidden layers are generated, they are used as inputs to calculate the final output of the neural network. A single threshold node is the most basic example of a neural network; most real-world networks are nonlinear and far more complex.

The main difference between regression and a neural network is the impact of changing a single weight. In regression, you can change one weight without affecting the contributions of the other inputs in the function. This is not the case with neural networks: since the output of one layer is passed into the next layer of the network, a single change can have a cascading effect on the other neurons in the network.
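The threshold-and-layers behavior described above, and the cascading effect of changing one weight, can be sketched with a tiny two-layer network of step-activated nodes. This is a hypothetical illustration: the inputs, weights, and helper names (`step`, `layer`, `forward`, `linear`) are made up for the example, and real networks use smooth activations and learned weights rather than hand-picked integers.

```python
# Hypothetical sketch: a tiny network of threshold (step) nodes, plus a linear
# regression model for comparison. All inputs and weights are made up.
from typing import List, Tuple

Matrix = List[List[int]]


def step(z: int) -> int:
    """Threshold activation: 1 if the weighted sum plus bias is >= 0, else 0."""
    return 1 if z >= 0 else 0


def layer(inputs: List[int], weights: Matrix, biases: List[int]) -> List[int]:
    """One layer of threshold nodes; row i of `weights` holds node i's weights."""
    return [
        step(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(x: List[int], w_hidden: Matrix, b_hidden: List[int],
            w_out: Matrix, b_out: List[int]) -> Tuple[List[int], List[int]]:
    """The hidden layer's outputs are the only inputs the output layer sees."""
    hidden = layer(x, w_hidden, b_hidden)
    return hidden, layer(hidden, w_out, b_out)


def linear(x: List[int], w: List[int], b: int) -> int:
    """Linear regression prediction: each weight scales only its own input."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


x = [1, 0, 1]
w_hidden = [[3, 1, 1], [1, 2, -2]]
b_hidden = [-3, -1]
w_out = [[2, 1]]
b_out = [-1]

hidden, out = forward(x, w_hidden, b_hidden, w_out, b_out)

# Change a single first-layer weight (3 -> 1) and rerun the whole network.
w_changed = [row[:] for row in w_hidden]
w_changed[0][0] = 1
hidden2, out2 = forward(x, w_changed, b_hidden, w_out, b_out)

print(hidden, out)    # [1, 0] [1]
print(hidden2, out2)  # [0, 0] [0]

# The same weight change in a linear model shifts the prediction by exactly
# (new_w - old_w) * x[0] = -2; no other term is affected.
print(linear(x, [3, 1, 1], -3), linear(x, [1, 1, 1], -3))  # 1 -1
```

Lowering one first-layer weight switches off a hidden node, and because the output node only sees hidden activations, the final decision flips from 1 to 0. In the linear model the same change moves the prediction by a fixed, isolated amount.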
To see how a single threshold node makes a decision, consider a tangible example: whether or not you should order a pizza for dinner. This decision will be our predicted outcome, or y-hat. Let’s assume that there are three main factors that will influence your decision:

- If you will save time by ordering out (Yes: 1; No: 0)
- If you will lose weight by ordering a pizza (Yes: 1; No: 0)
- If you will save money by ordering a pizza (Yes: 1; No: 0)

Then, let’s assume the following inputs:

- X1 = 1, since ordering out saves you time
- X2 = 0, since we’re getting ALL the toppings
- X3 = 1, since we’re only getting 2 slices

For simplicity, our inputs have a binary value of 0 or 1. Technically, this makes our node a perceptron, whereas neural networks primarily leverage sigmoid neurons, which accept any real-valued input and output a value anywhere between 0 and 1 rather than just 0 or 1. This distinction is important since most real-world problems are nonlinear, so we need values that reduce how much influence any single input can have on the outcome. However, summarizing the problem this way will help you understand the underlying math at play here.

Now we need to assign some weights to determine importance. Larger weights make a single input’s contribution to the output more significant compared to other inputs.

- W1 = 5, since you value your time
- W2 = 3, since you value staying in shape
- W3 = 2, since you’ve got money in the bank

Finally, we’ll also assume a threshold value of 5, which translates to a bias value of -5.

Since we have established all the relevant values for our summation, we can now plug them into the formula, where y-hat (our predicted outcome) is the decision to order pizza or not:

$$\hat{y} = w_1 x_1 + w_2 x_2 + w_3 x_3 + \text{bias} = (5 \cdot 1) + (3 \cdot 0) + (2 \cdot 1) - 5 = 2$$

Since y-hat is 2, which is greater than zero, the output from the activation function will be 1, meaning that we will order pizza.

A graph neural network (GNN) is a class of artificial neural networks for processing data that can be represented as graphs.

Figure: Basic building blocks of a graph neural network (GNN).

Edge weights can be taken into account with a weighted adjacency matrix, i.e., by setting each nonzero entry in the adjacency matrix equal to the weight of the corresponding edge. The graph attention network (GAT) was introduced by Petar Veličković et al.; it combines a graph neural network with an attention layer.
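The weighted adjacency matrix and the attention idea behind GAT can be sketched in plain Python. This is a hypothetical, minimal single-head layer under stated assumptions: the graph, features, and parameters are made up, the helper names (`weighted_adjacency`, `matvec`, `leaky_relu`, `gat_layer`) are illustrative, the LeakyReLU slope of 0.2 is an assumption, and the final nonlinearity a full GAT layer would apply is omitted. Here the edge weights only decide which nodes are neighbors; the attention coefficients are computed from node features.

```python
# Hypothetical sketch: a weighted adjacency matrix and one single-head,
# GAT-style attention step in plain Python. A real GAT learns W and a,
# applies a nonlinearity, and usually stacks several heads and layers.
import math
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def weighted_adjacency(n: int, edges: List[Tuple[int, int, float]]) -> Matrix:
    """Undirected graph: entry (i, j) holds the edge weight; 0 means no edge."""
    A = [[0.0] * n for _ in range(n)]
    for i, j, w in edges:
        A[i][j] = w
        A[j][i] = w
    return A


def matvec(M: Matrix, v: Vector) -> Vector:
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def leaky_relu(z: float, slope: float = 0.2) -> float:
    return z if z > 0 else slope * z


def gat_layer(A: Matrix, H: List[Vector], W: Matrix, a: Vector) -> List[Vector]:
    """One attention head: each node attends to its neighbors and itself."""
    Z = [matvec(W, h) for h in H]  # shared linear transform W h_i
    out = []
    for i in range(len(H)):
        # Neighborhood = nonzero entries of row i, plus a self-loop.
        nbrs = [j for j in range(len(H)) if A[i][j] != 0 or j == i]
        # e_ij = LeakyReLU(a . [W h_i || W h_j]); list + list concatenates.
        scores = [
            leaky_relu(sum(ak * zk for ak, zk in zip(a, Z[i] + Z[j])))
            for j in nbrs
        ]
        # Softmax over the neighborhood gives the attention coefficients.
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        alphas = [e / total for e in exps]
        # Attention-weighted sum of the transformed neighbor features.
        out.append([
            sum(al * Z[j][k] for al, j in zip(alphas, nbrs))
            for k in range(len(Z[i]))
        ])
    return out


A = weighted_adjacency(3, [(0, 1, 0.5), (1, 2, 2.0)])
print(A)  # [[0.0, 0.5, 0.0], [0.5, 0.0, 2.0], [0.0, 2.0, 0.0]]

H = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # made-up node features
W = [[1.0, 0.0], [0.0, 1.0]]              # identity transform for readability
print(gat_layer(A, H, W, [0.0, 0.0, 0.0, 0.0]))  # uniform attention
print(gat_layer(A, H, W, [0.5, -0.5, 1.0, 0.3]))  # feature-dependent attention
```

With an all-zero attention vector every neighbor receives the same coefficient, so the layer reduces to a mean over each node’s neighborhood; a nonzero vector lets the layer weight some neighbors more than others, which is the role the attention layer plays in GAT.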
